Mastery Cheat Sheet for Instructional Designers

Building agents

Start from a real call, not a persona document

The most effective agents come from actual customer calls — specifically the hard ones. Upload a call where the rep lost momentum, where a new objection surfaced, or where the buyer was unusually resistant. Outdoo generates an agent from that interaction. Reps practicing against it are rehearsing for the actual situation, not a textbook version of it.

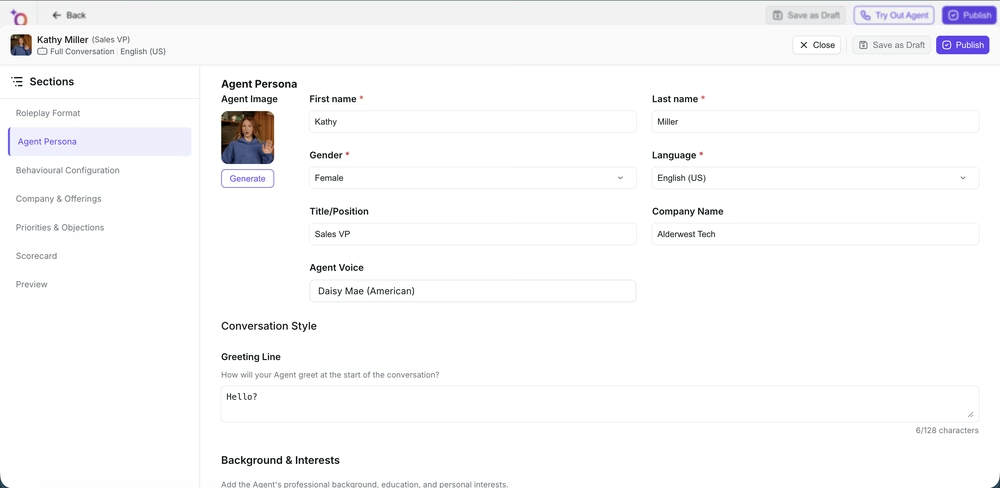

Write persona descriptions that constrain the AI specifically

"Skeptical VP" is not a persona — it is an adjective. A useful persona specifies role, seniority, company context, emotional starting state, what the buyer knows, what they do not know, which objections they will raise and in what order, and what would make them more open. Vague personas produce inconsistent agents. The more specific the description, the more reliably the agent behaves across multiple rep sessions.

Distinguish persona from scenario

The persona describes who the buyer is. The scenario describes where they are in their journey — actively evaluating, not aware they have a problem, currently using a competitor, recently had a bad experience with a vendor. A VP of Sales who is actively evaluating tools behaves completely differently from a VP of Sales who does not know your product exists. Both fields are required for a realistic simulation.

Use behavior settings to control difficulty and realism

Behavior settings let you control how the agent responds to rep actions: resistance to pivots, whether the buyer volunteers information or waits to be asked, how quickly they reveal objections, and what triggers their openness. These are the settings that distinguish a realistic agent from a predictable one. Tune difficulty to match the rep's current level — start accessible and increase as skills develop.

Set the first interaction flag correctly on your Roleplay Types

If you are building agents for cold calls or initial discovery, enable the first interaction toggle on the Roleplay Type. This configures the AI buyer to start with no relationship context, appropriate skepticism for cold contact, and opening-exchange behavior. Using a follow-up-configured agent for cold call training will not prepare reps for the resistance they will actually face.

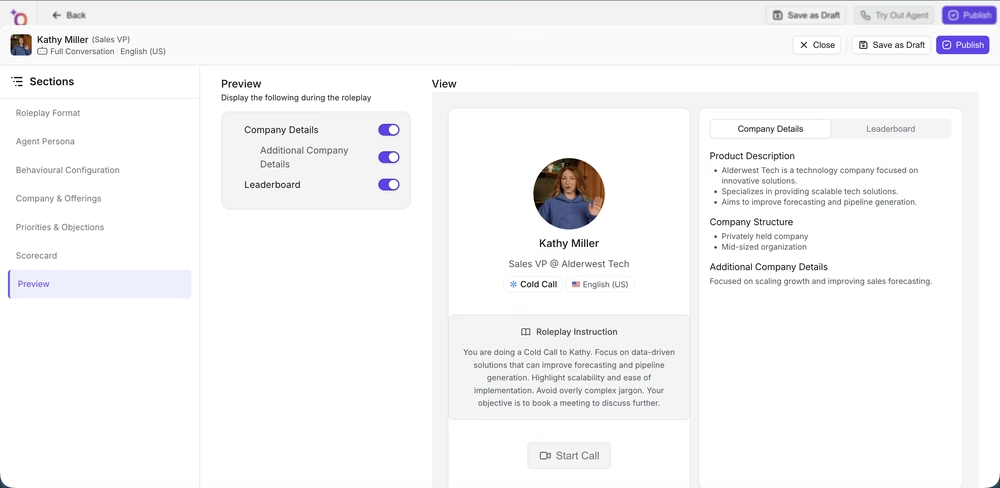

Always preview before publishing

Run the agent yourself before assigning it to any rep. Test specifically: does the AI stay in persona under pressure? Does it raise the objections you configured? Does it respond appropriately when the rep gives a genuinely good answer? An agent that behaves inconsistently in preview will behave inconsistently in practice sessions, and reps will stop trusting the feedback.

Designing scorecards

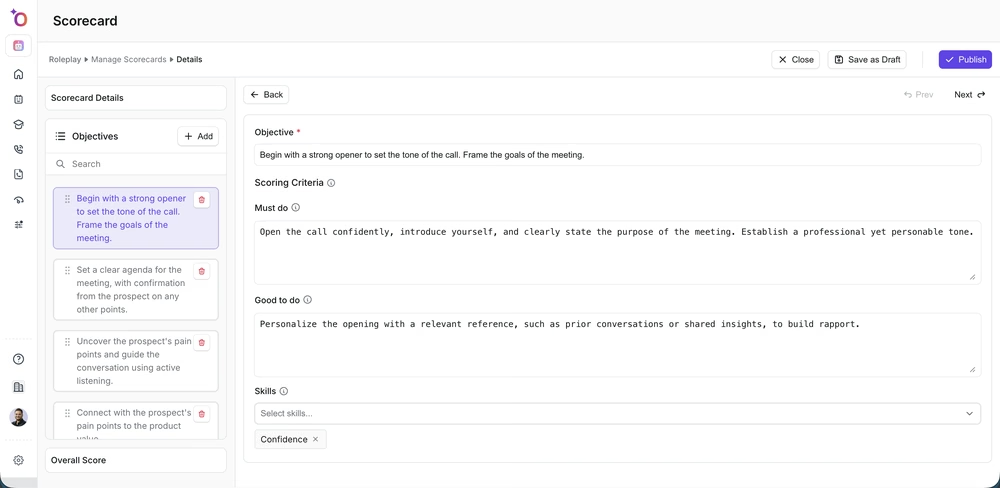

Score behavior, not outcomes

"Did the rep set a next step?" is an outcome you can see in the CRM. "Did the rep confirm the next step using the buyer's own calendar availability and get a verbal commitment?" is a behavior. Scoring behaviors gives you the cause; scoring outcomes gives you a result you already knew. Every criterion on your scorecard should describe something observable in the transcript, not something you infer from what happened next.

Every criterion maps to a specific playbook line

Before writing a scorecard criterion, find the corresponding line in your sales playbook or call guide. If you cannot point to a specific documented behavior the rep is expected to demonstrate, the criterion does not belong on the scorecard. Scorecards should be a machine-readable version of your playbook — not a list of general sales skills.

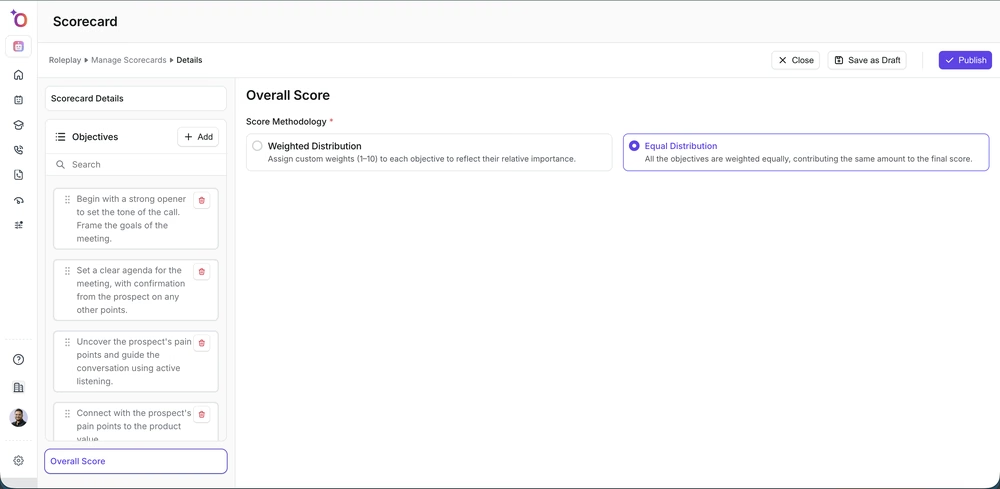

Use distribution weighting to reflect real priorities

Not all criteria carry equal weight. A rep who nails discovery but stumbles on the next-steps ask is in a different position than one who does the reverse. Configure point distribution to reflect actual priorities. A criterion worth 5% of the score will not change coaching behavior. If something matters, weight it accordingly.

Use the same scorecard for roleplay and live calls

Outdoo applies your scorecard to both practice and real conversations. This consistency is the feature that makes training data meaningful — you can compare a rep's discovery score in roleplay with their discovery score on real calls. Different scorecards for practice and live breaks this comparison and removes the signal you need to measure transfer.

Designing simulations

Document the correct path before opening the builder

Walk through the real workflow yourself and write down every step — every field, every click, every screen transition — before configuring the simulation. The most common source of simulation data quality problems is an ambiguous correct path. If you are not certain what the correct sequence is, the simulation will teach the wrong thing.

Score mandatory fields at higher weight than optional ones

In simulation scoring, a missed required field that causes a compliance issue or breaks a downstream process deserves more weight than an optional field left blank. Configure scoring weights to reflect business risk. Uniform weighting teaches reps that all steps are equally important — which is not true and is not what you want them to internalize.

Keep each simulation to a single workflow

One simulation per workflow. A 20-step simulation covering the entire post-call process gives you aggregate accuracy. A 6-step simulation covering just the opportunity stage update gives you specific, actionable data on exactly where errors happen. Build small and specific. You can always add more simulations as you identify new training gaps.

Test for skipped steps, not just wrong steps

The most common mistakes in post-call workflows are not doing the wrong thing — they are not doing a required thing at all. When configuring scoring, explicitly flag steps where omission is the likely failure mode (required fields, mandatory disposition selection). The scoring system will not automatically catch what was skipped unless you configure it to.

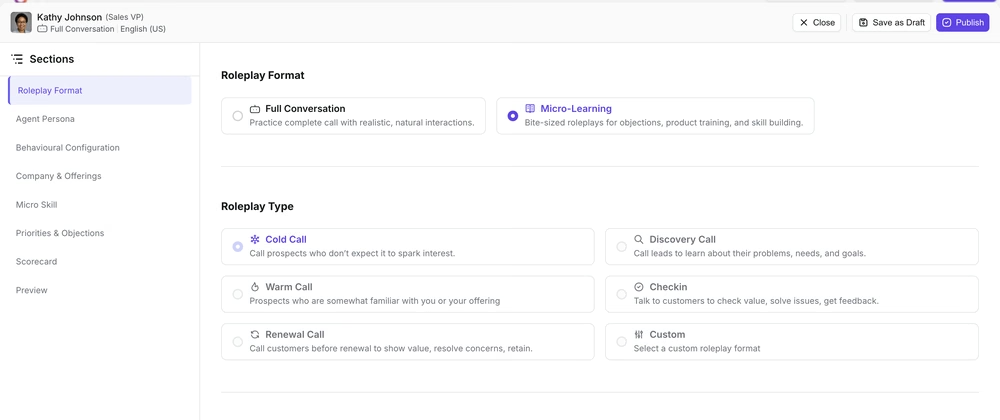

Designing micro-learning

Use micro-learning for reinforcement, not initial learning

Micro-learning agents drop reps into a single moment — the pricing objection, the next-steps ask, the competitive pivot — and score them on just that moment. They are not replacements for full-call roleplay. Use them after a full training program to maintain and sharpen specific skills, or during a call blitz to drill one skill fast.

Keep micro-learning agents under 3 minutes

A micro-learning agent that runs longer than 3 minutes is a full roleplay with a different name. The value of micro-learning is that it fits into a gap in the day. Design them to start at the relevant moment, cover 2–3 exchanges, and end. If the scenario needs more than that to make sense, it belongs in a standard agent.

Testing and iteration

Run everything yourself before any rep does

Walk through every agent, simulation, and micro-learning module as if you were the learner. For agents: does it feel like the right buyer? For simulations: does the interface match the real system closely enough that practice transfers? You will find design problems in five minutes that would take weeks to surface in rep feedback.

Use first-cohort data to improve design, not just measure performance

The first cohort through any new program surfaces instructional design gaps. A low score on a specific criterion might mean reps lack the skill — or it might mean the agent is not testing for that skill effectively, or the criterion is ambiguously written. Investigate before drawing conclusions. The data tells you something happened; only design review tells you what.

If you need help, contact us at support@outdoo.ai.