How do I know if my training is working?

Most training programs can tell you whether people completed the training. Outdoo can tell you whether it changed anything. This article explains which metrics to look at, what they tell you, and what to do when the numbers say your training is not landing the way you expected.

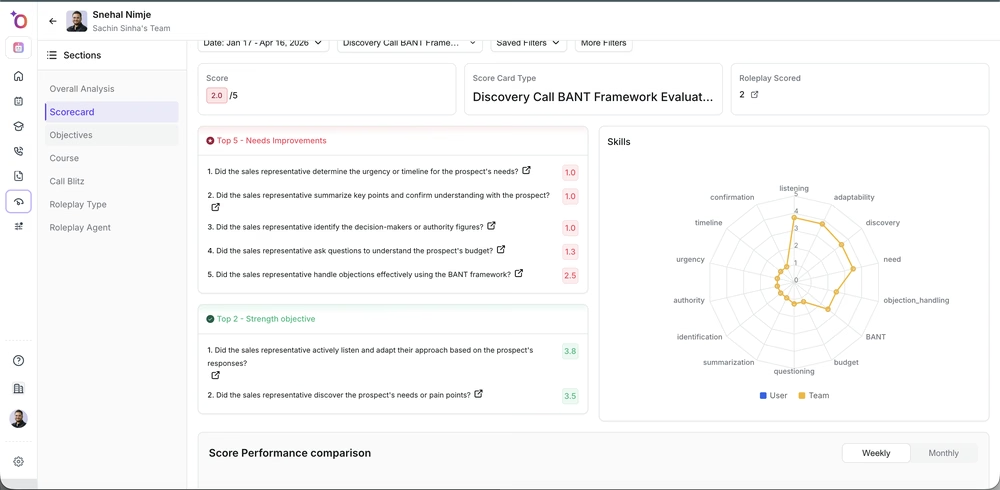

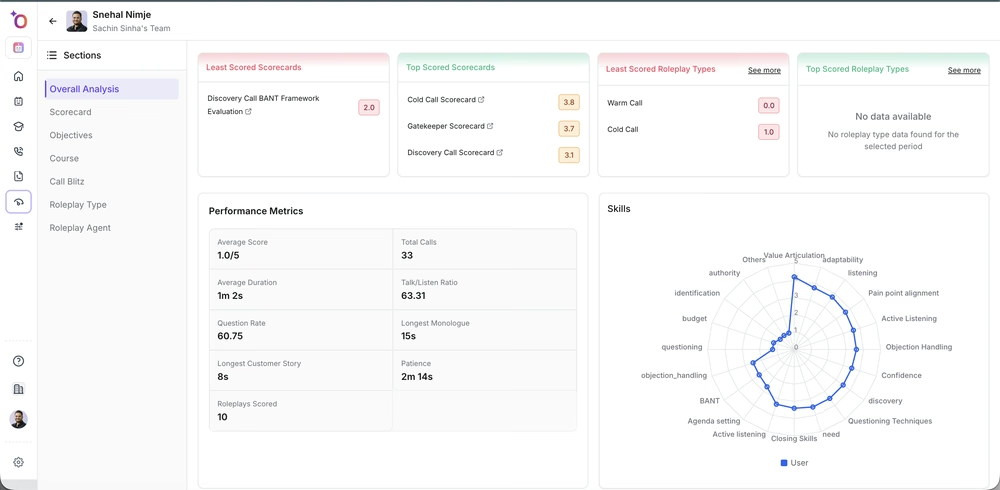

The primary signal: roleplay score vs live call score

The most direct measure of training effectiveness is the comparison between how a rep performs in practice and how they perform in real calls, on the same scorecard criteria.

After a training program or onboarding cohort, pull two numbers for each rep on each criterion you trained:

- Their average roleplay score on that criterion during training

- Their average live call score on the same criterion in the two to three weeks after training ended

A rep who scored 75% on discovery question quality in roleplay and is now scoring 70% on real calls has roughly transferred the skill. A rep who scored 80% in roleplay and is scoring 45% on real calls has not. The gap is the signal.

What a large gap tells you

When roleplay scores are significantly higher than live call scores on the same criteria, there are three likely causes:

- The agents were not realistic enough. Reps got good at handling the practice version of the objection but the real version is harder, faster, or phrased differently.

- The rep needs more practice volume. They understood the concept well enough to score in a controlled session but it is not automatic yet. Repetition — not a new training program — is the fix.

- The environment is different in ways the training did not address. In-person calls versus video calls, inbound versus outbound, new market versus existing segment. If the gap shows up across the team on a specific call type, update the agents to match that context.

A small gap in either direction is normal and expected. A gap larger than 15–20 percentage points on a criterion that was specifically trained is worth investigating.

Scorecard trends over time

Individual post-training comparisons tell you about a single program. Scorecard trends over 8–12 weeks tell you whether your coaching system is working as an ongoing practice.

Navigate to Insights > Team and look at criterion-level scores over time for individual reps. A rep whose discovery score has been flat for eight weeks despite coaching and practice is telling you something — either the coaching is not specific enough, or the agents they are practicing against are not challenging enough to drive improvement.

Simulation accuracy as a leading indicator

For post-call workflows — CRM updates, dispositioning, data entry — simulation accuracy is a strong predictor of live execution quality. If a rep passes the CRM simulation at 90%, they will make few errors in the first weeks. If they passed at 60%, expect data quality issues.

Run this correlation after your first simulation-based onboarding cohort: compare simulation scores against CRM data quality metrics in weeks 1–3. The correlation is typically strong, and it gives you a defensible threshold for what "passing" should mean for your team.

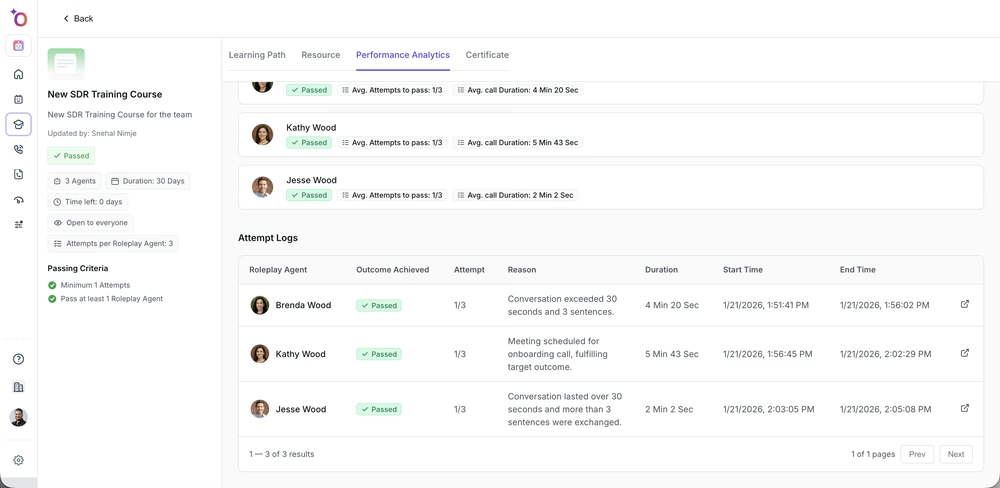

Certification pass rate vs completion rate

Completion rate tells you people went through the training. Pass rate tells you they could demonstrate the skill at the required level. The gap between these two numbers is informative:

- High completion, high pass rate: training is working as designed

- High completion, low pass rate: the training is not preparing people to meet the threshold, or the threshold is calibrated too high — investigate both

- Low completion, high pass rate among completers: an access or assignment problem, not a content problem

Red flags: when training is not working

Some patterns in the data are strong signals that something in your training design or delivery needs to change:

- Roleplay scores have plateaued for multiple reps at the same level on the same criterion. The agents are too easy and are no longer driving improvement.

- Simulation accuracy is high but live CRM errors are still common. The simulation is not replicating the real tool closely enough — update the screenshots and steps.

- Post-training live call scores are lower than pre-training baseline. This usually means reps are overthinking the criteria they were just evaluated on rather than executing naturally. Run a reset session focused on instinct rather than checklist.

- Pass rates drop significantly on the second or third certification in a program. Difficulty is escalating faster than the training is preparing people for it — recalibrate the agent difficulty curve.

Building the habit of checking

Training effectiveness data is only useful if someone looks at it on a regular cadence. Build a monthly or bi-weekly review into your enablement routine:

- Pull the roleplay vs live call comparison for the most recent cohort

- Check criterion trends for reps who completed training 30 and 60 days ago

- Review simulation accuracy against any CRM quality metrics you have available

- Flag any agents that have not been updated in more than 90 days — stale agents produce stale scores

The teams that get the most from Outdoo are not the ones running the most training. They are the ones who check whether the training worked and adjust what they build based on what the data shows.

If you need help, contact us at support@outdoo.ai.